
Using "Retrieval" To Enhance ChatGPT's Capabilities

Posted by Jim Craner on April 30, 2023

My colleagues can confirm that I'm the first person to rave about how useful ChatGPT has been for my work. Since it became available, I've been using it for tons of everyday tasks: writing, coding, strategizing, brainstorming, and more. But a lot of the recent hype around AI tools, especially around everyday use of the basic ChatGPT system from OpenAI, really needs to be taken with a grain of salt!

Anyone who has attended our Public Library & AI Demonstration, Discussions, and Discovery events knows that ChatGPT and similar AI tools based on "Large Language Models" ("LLMs") are great at certain language-based tasks, but absolutely terrible at others. The basic strengths of LLM tools fall into these categories:

- "understand" text

- "classify" text, grouping it by sentiment or other characteristics

- "summarize" text, based on that "understanding"

- "extract" information from text

- "transform" text (e.g., translation)

- "generate" text based on a prompt ("completion")

- "converse" based on a prompt

These are incredibly useful strengths, and we're always thinking of new ways to combine them. But ChatGPT definitely has some weaknesses as well:

Knowledge Cut-off

As of this writing, ChatGPT has a knowledge cut-off of September 2021. That means the "trainers" of the AI only used information available up to September 2021 to "teach" ChatGPT, so it will have limited, if any, knowledge of events that happened after that point.

Plugin Woes

ChatGPT has added plugins: "add-on tools" that, once installed, let the chat application interact with other websites or services. For instance, you can enable the Kayak plugin, which allows ChatGPT to search the Kayak.com flight search engine on your behalf. Plugins have a ton of potential, but right now there are only a few "approved" plugins, and they're only available to a subset of ChatGPT users.

Hallucinations

ChatGPT knows a LOT; after all, its training data included a huge portion of the public Internet. But ChatGPT also "hallucinates," which is the AI term for "just making up complete nonsense, but stating it confidently." Anyone who's used ChatGPT for a while has encountered these hallucinations, along with the confidence with which ChatGPT delivers its made-up statements. Hallucinations keep ChatGPT from being a reliable search engine, discovery layer, or readers' advisory resource, or from handling the many other tasks where accuracy of information is paramount.

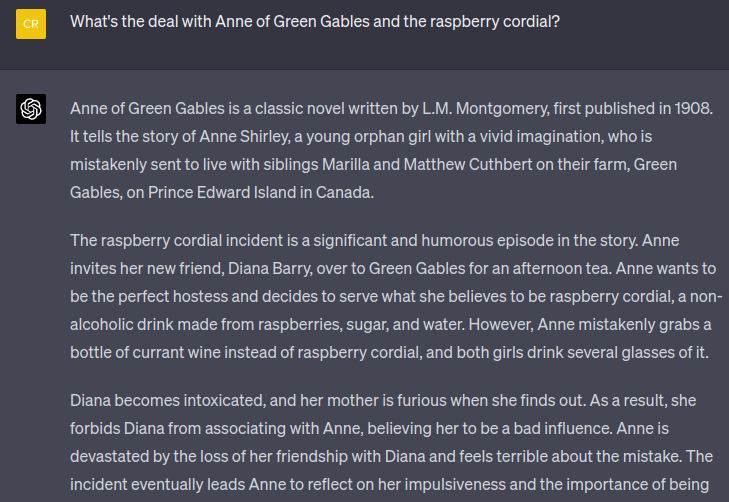

Let's look at an example of ChatGPT making both a truthful statement and a hallucination.

When testing AI-based tools (what we call "intelligent tools"), we frequently use the Project Gutenberg library of public domain books as test data. For our example, let's use the classic "Anne of Green Gables" by L. M. Montgomery. Anyone who's read the book might remember the famous raspberry cordial episode: young Anne mistakenly serves her friend Diana alcoholic currant wine instead of the raspberry cordial soft drink she intended. Diana gets tipsy, and both girls get in trouble. This is a popular scene in a popular book, so naturally ChatGPT has "read about it" and can accurately share what happened.

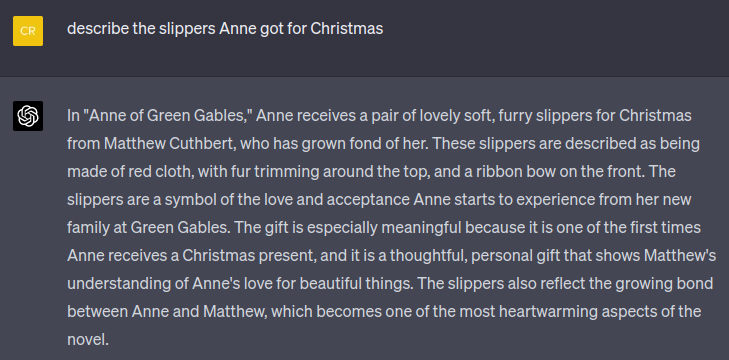

So far, so good, right? But what if we ask ChatGPT about something less well known, like the slippers Anne got for Christmas?

Uh oh! This is a complete fabrication, folks. Or, in AI terms, a "hallucination." Readers of chapter 25 will remember that, in the actual book:

- the slippers come from Diana's Aunt Josephine, not from Matthew;

- the slippers are made from kid (goatskin), not furry red cloth;

- Matthew's gift to Anne was actually the dress with the puffed sleeves.

Intelligent Tools to the Rescue!

So, how do we get around these problems? Like a lot of technology teams, we are hard at work building intelligent tools on top of these new AI services, and we've spent a lot of time envisioning how library staff, and patrons, could benefit from them. We want to make sure these tools are:

- able to access up-to-date information from after ChatGPT's general training cut-off date;

- reliable and easy to use, even for staff without ChatGPT access; and

- trustworthy, because their answers are based on legitimate source material.

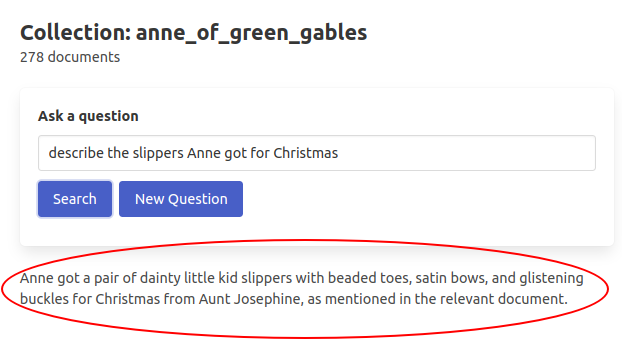

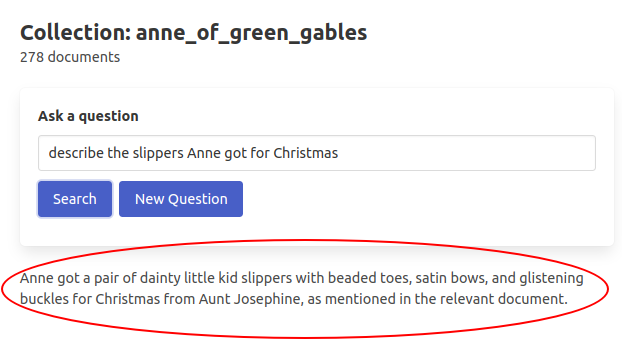

Here's a screenshot from an early version of our Document Analysis tool, displaying the correct answer to the question.

So what's the secret? Apps like this use a strategy called "retrieval" (often called "retrieval-augmented generation") to supply ChatGPT with the relevant information it needs to answer the question. Here's a simple explanation of how it works.

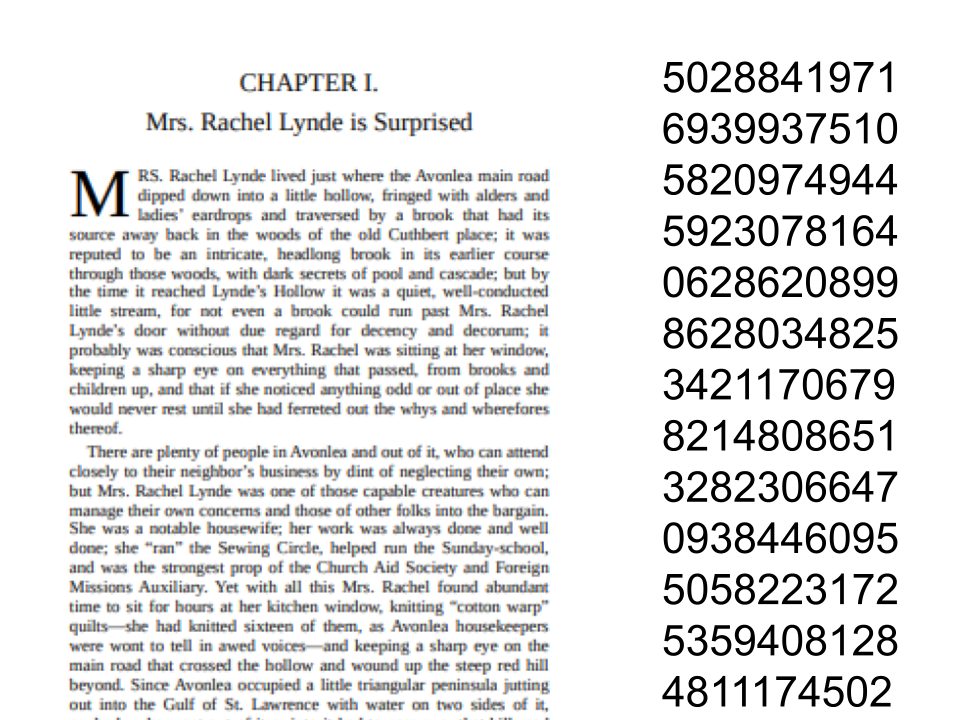

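First, we upload the document into a special kind of database (commonly called a "vector database"). AI systems like ChatGPT can only take in a limited amount of text at a time, so they can't "read" (process) an entire book at once. Instead, the document is split into smaller sections, roughly the size of pages.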
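The splitting step described above could look something like the minimal Python sketch below. It is an illustration under stated assumptions, not our actual implementation: the function name `split_into_chunks` is hypothetical, sizes are counted in words for simplicity (real systems usually count tokens), and the default chunk and overlap sizes are arbitrary.

```python
def split_into_chunks(text: str, words_per_chunk: int = 200, overlap: int = 20) -> list[str]:
    """Split a long document into overlapping, roughly page-sized chunks.

    Hypothetical helper: sizes are measured in words, and the defaults are
    illustrative assumptions rather than recommended values.
    """
    if not 0 <= overlap < words_per_chunk:
        raise ValueError("overlap must be non-negative and smaller than words_per_chunk")
    words = text.split()
    step = words_per_chunk - overlap  # how far each new chunk starts after the previous one
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + words_per_chunk]))
        if start + words_per_chunk >= len(words):
            break  # this chunk already reached the end of the document
    return chunks
```

With the defaults, a 450-word text becomes three chunks covering words 1 to 200, 181 to 380, and 361 to 450. The small overlap makes it less likely that a sentence straddling a chunk boundary gets cut off in every chunk.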

Next, each section of the document is run through an AI model that transforms it into a long list of numbers (an "embedding") capturing what the text is about. Modern AI systems like ChatGPT are "large language models" ("LLMs"), but underneath? It's all numbers :-)
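To make "it's all numbers" a little more concrete, here is a deliberately simplified Python sketch. It is a toy stand-in, not a real embedding model: the hypothetical `embed` function just hashes each word into one of 256 slots and counts it, then scales the list of numbers to length 1. A real system would instead call a trained embedding model (for example, OpenAI's embeddings API), whose numbers capture meaning rather than just shared words. The `cosine_similarity` helper shows how two such lists can be compared.

```python
import hashlib
import math
import re


def embed(text: str, dims: int = 256) -> list[float]:
    """Toy stand-in for an embedding model: a hashed bag-of-words vector.

    Each word is hashed (deterministically, via MD5) to one of `dims` slots and
    that slot's count goes up by one. The vector is then scaled to length 1 so
    texts of different lengths can be compared fairly.
    """
    vector = [0.0] * dims
    for word in re.findall(r"[a-z']+", text.lower()):
        slot = int(hashlib.md5(word.encode("utf-8")).hexdigest(), 16) % dims
        vector[slot] += 1.0
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector] if norm else vector


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Dot product of two unit-length vectors: 1.0 means identical direction,
    values near 0.0 mean the texts share little or nothing."""
    return sum(x * y for x, y in zip(a, b))
```

Comparing `embed("slippers for Christmas")` with a sentence about Christmas slippers gives a noticeably higher score than comparing it with a sentence about raspberry cordial. A real embedding model would also match synonyms and paraphrases, which this word-counting toy cannot.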

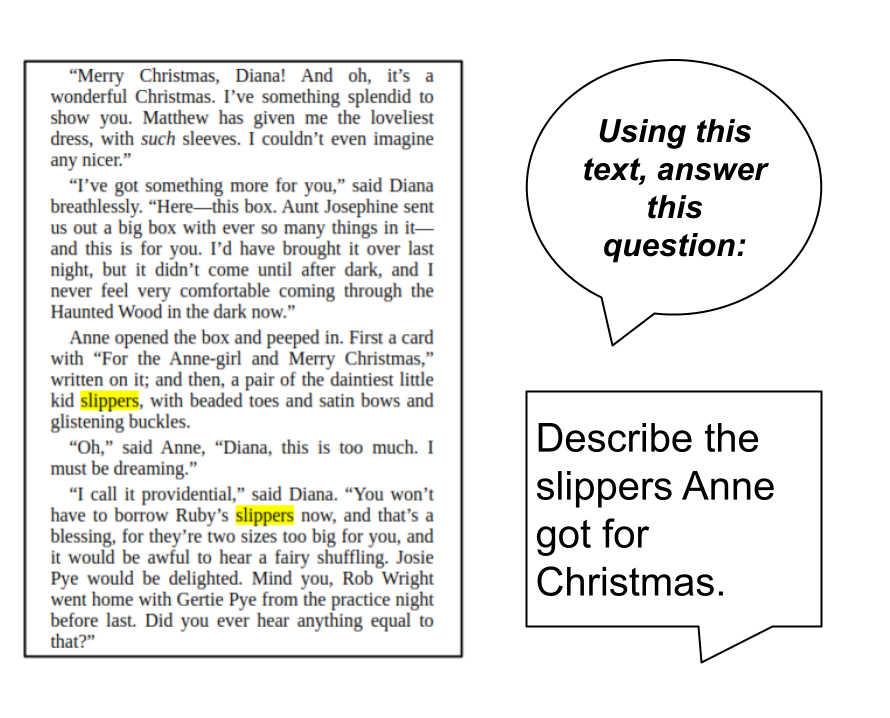

Now when you ask the system a question, your question is transformed into numbers the same way, and the system looks for the pages in the database whose numbers are most similar, meaning the pages most likely to contain the answer. Those pages and your original question are then given to ChatGPT, usually along with a specific instruction such as "Given the context of these pages, answer the following question."
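The question-matching and prompt-building step can be sketched in Python as follows. This is a minimal, self-contained illustration, not the Document Analysis tool's code: `to_vector`, `similarity`, `retrieve`, and `build_prompt` are hypothetical names, simple word counts stand in for a real embedding model, and `top_k=2` is an arbitrary choice. The instruction text is the example prompt quoted above.

```python
import math
import re
from collections import Counter


def to_vector(text: str) -> Counter:
    """Toy stand-in for an embedding model: word counts as a sparse vector."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(c * c for c in a.values())) * math.sqrt(sum(c * c for c in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, pages: list[str], top_k: int = 2) -> list[str]:
    """Return the `top_k` pages whose vectors are most similar to the question's."""
    q = to_vector(question)
    ranked = sorted(pages, key=lambda page: similarity(q, to_vector(page)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, pages: list[str]) -> str:
    """Combine an instruction, the retrieved pages, and the question into one prompt."""
    context = "\n\n".join(f"[Page {i + 1}]\n{page}" for i, page in enumerate(pages))
    return (
        "Given the context of these pages, answer the following question.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


pages = [
    "Anne mistakenly served Diana currant wine instead of raspberry cordial.",
    "Miss Barry sent Anne a pair of kid slippers for Christmas.",
    "Matthew gave Anne a dress with puffed sleeves.",
]
question = "Who sent Anne slippers for Christmas?"
prompt = build_prompt(question, retrieve(question, pages, top_k=1))
```

The string returned by `build_prompt` is what would then be sent to ChatGPT (for example, through OpenAI's chat API); that call is left out so the sketch runs on its own.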

Because it now has the relevant text of the book to work from, ChatGPT can answer the question correctly instead of hallucinating!

Are you using AI in your library or information services operations? Let us know! Interested in learning more about AI and intelligent tools? Check out our AI workshop series, beginning in May 2023!